Benchmarking Azure Table Storage operations in ASP.NET Application

I conducted this benchmark to measure how long it takes to read and write data in Azure Table Storage, in order to get a basic sense of the latencies at which it operates.

The following three operations were benchmarked:

- Write

- Point Read

- Table Scan

If you don't know about any of these operations, I would encourage you to read Type of Queries in Azure Table Storage.

How I performed the benchmark

I developed an ASP.NET application that included endpoints for executing read and write operations on Azure Table Storage, a common scenario when working with Table Storage. To record the latencies involved in these operations, I utilized custom metrics provided by Application Insights.

Please refer to How to collect custom metrics using Application Insights if you are unfamiliar with collecting custom metrics.

```csharp
app.MapPost("/AddProduct", async (TableClient client, ProductGenerator pg, TelemetryClient tc) =>
{
    // Generate a single random Product entity.
    var product = pg.Generate()[0];

    // Record the payload size as the UTF-8 byte count of the JSON-serialized entity.
    var payloadSize = Encoding.UTF8.GetByteCount(JsonSerializer.Serialize(product));
    tc.GetMetric("AddProduct.PayloadSize").TrackValue(payloadSize);

    // Time only the Table Storage write.
    var s = Stopwatch.StartNew();
    await client.AddEntityAsync(product);
    s.Stop();
    tc.GetMetric("AddProduct.Duration").TrackValue(s.Elapsed.TotalMilliseconds);

    return Results.Ok();
});
```

Next, I deployed the application to Azure App Service, in the same region as the storage account.

After deploying the application, I used Bombardier to conduct benchmark tests. For each test, I sent concurrent HTTP requests for a duration of 1 minute.

After each test, I collected the results from Application Insights.

Technical and Environment details

| Property | Value |
|---|---|
| .NET version | 7 |
| Azure App Service | P2V3 (4 cores, 16 GB RAM) |
| Azure App Service Instance Count | 1 |
| Azure.Data.Tables version | 12.8.1 |
| Azure Storage | Standard v2 |
| Region | Central India |

You can find the complete source code of the application on my GitHub.

Write Operation

As you observed in the preceding code snippet, I used a ProductGenerator service for entity generation. This service creates a Product entity with random data, and then I used TableClient to insert this entity.
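The `/AddProduct` snippet measures payload size as the UTF-8 byte count of the JSON-serialized entity. Here is a minimal, standalone sketch of that measurement. The anonymous object is only a hypothetical stand-in for the `Product` entity (its real shape is not shown in this post), and the padded `Description` field is an assumed way of steering the payload toward a target size.

```csharp
using System;
using System.Text;
using System.Text.Json;

// Hypothetical stand-in for the Product entity; property names are assumptions.
var product = new
{
    PartitionKey = "electronics",
    RowKey = Guid.NewGuid().ToString(),
    Name = "Sample product",
    // Padding field used only to push the payload toward a target size.
    Description = new string('x', 400)
};

// Same measurement as in the /AddProduct endpoint:
// serialize to JSON, then count the UTF-8 bytes.
string json = JsonSerializer.Serialize(product);
int payloadSize = Encoding.UTF8.GetByteCount(json);

Console.WriteLine($"Payload size: {payloadSize} bytes ({payloadSize / 1024.0:F2} KB)");
```

Note that this is the size of the JSON representation of the entity, which may differ from the number of bytes the SDK actually sends over the wire.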

I ran the write tests with different arguments; the parameter space is outlined below:

| Parameters | Values |
|---|---|
| Concurrent Connections | 50, 250 |
| Payload Size | 0.5 KB, 1 KB |

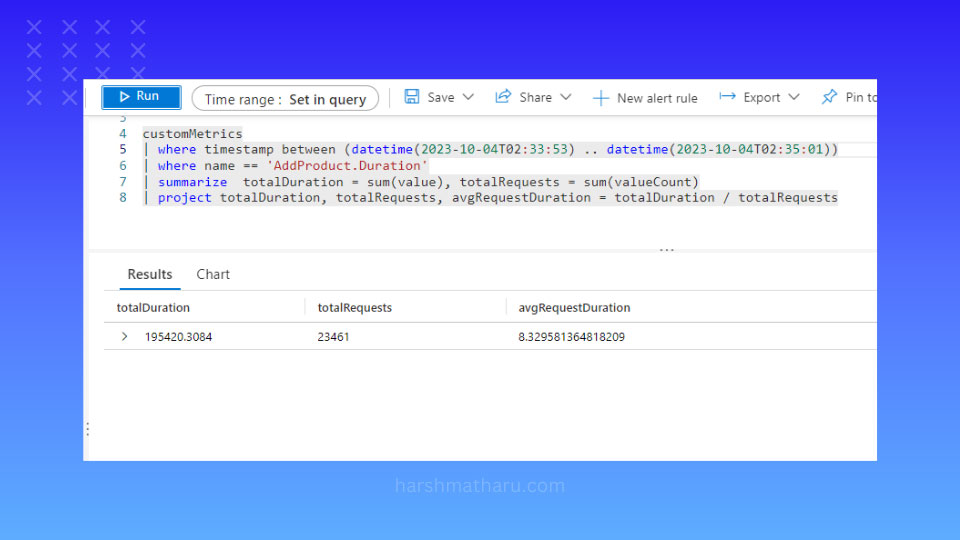

These are the benchmark results for the write operations:

| | Payload Size (0.5 KB) | Payload Size (1 KB) |
|---|---|---|
| 50 Concurrent Connections | 8.3 ms | 7.5 ms |
| 250 Concurrent Connections | 9.9 ms | 8.5 ms |

These results show how fast Azure Table Storage is, with latency consistently in the single-digit millisecond range. Interestingly, the results do not differ substantially across the parameters. The 1 KB payload appeared to yield slightly lower latencies, but that is most likely due to random variation.

Point Read

Because the write results did not differ substantially across parameter combinations, I ran the read tests only with 250 concurrent connections and a 1 KB payload size.

Since the point read is the most efficient form of query, I expected relatively low latency and indeed, it was:

The average duration for the point read was 6.5 ms.

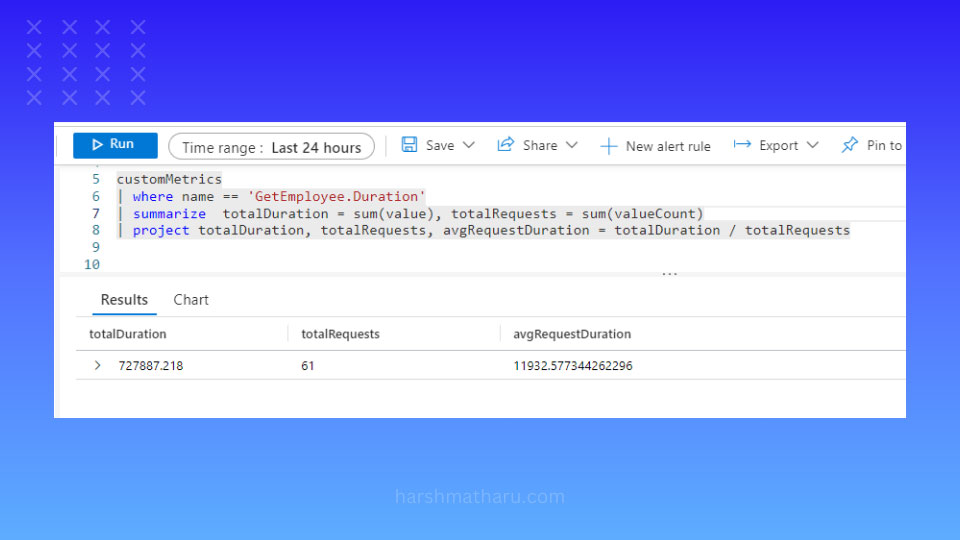
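For reference, a point read endpoint instrumented in the same way as `/AddProduct` might look like the sketch below. The actual read endpoint is not shown in this post, so the route `/GetProduct/{partitionKey}/{rowKey}`, the table name `Products`, the configuration key `StorageConnectionString`, and the metric name `GetProduct.Duration` are all assumptions made for illustration.

```csharp
using System;
using System.Diagnostics;
using Azure;
using Azure.Data.Tables;
using Microsoft.ApplicationInsights;
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.Http;
using Microsoft.Extensions.DependencyInjection;

var builder = WebApplication.CreateBuilder(args);

// Assumed configuration key and table name; replace with your own.
var connectionString = builder.Configuration["StorageConnectionString"]
    ?? throw new InvalidOperationException("StorageConnectionString is not configured.");

builder.Services.AddApplicationInsightsTelemetry();
builder.Services.AddSingleton(new TableClient(connectionString, "Products"));

var app = builder.Build();

// Hypothetical route: a point read needs both the PartitionKey and the RowKey.
app.MapGet("/GetProduct/{partitionKey}/{rowKey}",
    async (string partitionKey, string rowKey, TableClient client, TelemetryClient tc) =>
{
    var s = Stopwatch.StartNew();
    try
    {
        // Point read: the entity is addressed directly by its full key.
        await client.GetEntityAsync<TableEntity>(partitionKey, rowKey);
    }
    catch (RequestFailedException ex) when (ex.Status == 404)
    {
        // Missing entities are not recorded in the duration metric.
        return Results.NotFound();
    }
    s.Stop();

    tc.GetMetric("GetProduct.Duration").TrackValue(s.Elapsed.TotalMilliseconds);
    return Results.Ok();
});

app.Run();
```

As in the write endpoint, the stopwatch wraps only the Table Storage call, so the metric excludes HTTP request handling in the application itself.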

Table Scan

I was more interested in the duration of table scans, which are the slowest type of query in Table Storage and are generally discouraged. The warning proved valid: the average duration of a table scan was 11,932 ms, nearly 12 seconds.

In this result, a table scan was approximately 1,800 times slower than a point read under the same test conditions.
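The ratio follows directly from the two averages reported in this post, as this small calculation shows:

```csharp
using System;

// Average durations reported in this post, in milliseconds.
const double tableScanMs = 11_932;
const double pointReadMs = 6.5;

double slowdown = tableScanMs / pointReadMs;

// Prints: Table scan is about 1836x slower than a point read
Console.WriteLine($"Table scan is about {slowdown:F0}x slower than a point read");
```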

It's worth noting that the time taken by a table scan grows with the size of the table; the larger the table, the longer the scan takes. In this particular case, the table contained only 60,000 entities.

As a general practice, it's advisable to avoid table scans due to their significant performance overhead.
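To make the difference from a point read concrete, here is a sketch of what a table scan can look like with Azure.Data.Tables. The query used in the benchmark is not shown in this post, so the route `/ScanProducts`, the non-key property `Category`, the table name `Products`, the configuration key `StorageConnectionString`, and the metric name are all assumptions.

```csharp
using System;
using System.Diagnostics;
using Azure.Data.Tables;
using Microsoft.ApplicationInsights;
using Microsoft.AspNetCore.Builder;
using Microsoft.AspNetCore.Http;
using Microsoft.Extensions.DependencyInjection;

var builder = WebApplication.CreateBuilder(args);

// Assumed configuration key and table name; replace with your own.
var connectionString = builder.Configuration["StorageConnectionString"]
    ?? throw new InvalidOperationException("StorageConnectionString is not configured.");

builder.Services.AddApplicationInsightsTelemetry();
builder.Services.AddSingleton(new TableClient(connectionString, "Products"));

var app = builder.Build();

app.MapGet("/ScanProducts", async (TableClient client, TelemetryClient tc) =>
{
    var s = Stopwatch.StartNew();
    var matches = 0;

    // The filter uses neither PartitionKey nor RowKey, so the service cannot
    // narrow the search and has to examine the entities of the whole table.
    // "Category" is a hypothetical non-key property.
    await foreach (var entity in client.QueryAsync<TableEntity>(filter: "Category eq 'Books'"))
    {
        matches++;
    }
    s.Stop();

    tc.GetMetric("ScanProducts.Duration").TrackValue(s.Elapsed.TotalMilliseconds);
    return Results.Ok(matches);
});

app.Run();
```

Enumerating the query result with `await foreach` fetches further result pages as needed, so the measured duration covers the complete scan rather than only the first page.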

Conclusion

Azure Table Storage is a relatively fast data store, offering single-digit millisecond latency for most operations. This speed makes it an attractive choice for applications that require low latency.

However, table scans in particular incur a significant performance overhead and should be avoided.